You’re online every day, but have you ever wondered how the data on websites is gathered and analyzed? That’s where web scraping comes in. This guide will break down everything you need to know, from how it works to the tools you can use. Let’s dive in!

What Is Web Scraping?

Web scraping is a technique that automates the process of collecting data from websites. Unlike manually copying and pasting information, web scraping uses software to fetch web pages and extract the data you need. This can be particularly useful for gathering large volumes of data quickly and efficiently. From market research to competitor analysis, the use cases are vast.

The Process of Web Scraping

Before diving into the different tools, it’s crucial to understand the basic steps involved in web scraping. Think of it as a roadmap that guides you through the entire process, from choosing the website to storing the data. Here’s how it usually goes:

- Target Website: First, you identify the website you want to scrape. It could be anything from a real estate listing site to a social media platform.

- Data Identification: Next, you figure out what data you need. Are you looking for product prices, reviews, or maybe even full pages?

- Request and Fetch: The scraper sends a request to the website’s server. If all goes well, the server sends back the data, usually in HTML format.

- Data Extraction: Now it’s time to pull out the data you need from the HTML. This is where coding skills or web scraping tools often come in handy.

- Data Storage: Finally, the scraped data is stored in a database or a file so you can analyze it later.
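The steps above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not a production scraper: the `example-scraper/0.1` User-Agent, the `price` class name, and the sample HTML are all assumptions made for the demo, and `fetch` is defined but never called so the sketch runs without touching a real site.

```python
import csv
import io
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch(url: str) -> str:
    """Request and fetch: download a page and return its HTML as text."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class PriceParser(HTMLParser):
    """Data extraction: collect the text inside every <span class="price">."""

    def __init__(self):
        super().__init__()
        self.in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the tag.
        if tag == "span" and ("class", "price") in attrs:
            self.in_price = True

    def handle_endtag(self, tag):
        if tag == "span":
            self.in_price = False

    def handle_data(self, data):
        if self.in_price and data.strip():
            self.prices.append(data.strip())


def extract_prices(html: str) -> list[str]:
    parser = PriceParser()
    parser.feed(html)
    return parser.prices


def to_csv(prices: list[str]) -> str:
    """Data storage: serialize the results as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["price"])
    for price in prices:
        writer.writerow([price])
    return buffer.getvalue()


# Hypothetical page content standing in for a fetched response.
SAMPLE_HTML = """
<ul>
  <li>Desk <span class="price">$120</span></li>
  <li>Chair <span class="price">$45</span></li>
</ul>
"""

prices = extract_prices(SAMPLE_HTML)
csv_text = to_csv(prices)
print(csv_text)
```

Against a live page you would replace `SAMPLE_HTML` with `fetch(url)` and write `csv_text` to a file. Libraries such as BeautifulSoup make the extraction step shorter; the standard-library parser is used here only to keep the sketch dependency-free.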

Behind the Scenes: Technologies Involved

Understanding web scraping also means knowing a bit about the tech that makes it possible.

- HTTP: This is the set of rules that governs how data is transferred over the web. When a scraper sends a request, it’s using HTTP.

- HTML and CSS: These are the building blocks of web pages. HTML provides the structure, and CSS styles it. Knowing these helps in identifying what data to scrape.

- Programming Languages: Languages like Python and JavaScript are often used to write the scraping code. Python libraries like BeautifulSoup and Scrapy can make this even easier.

- Databases: Once you have the data, you’ll often store it in a SQL database such as SQLite or PostgreSQL for easy access and analysis.
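To make the storage piece concrete, here is a small sketch using Python's built-in `sqlite3` module. The table layout, the product rows, and the `example.com` URLs are hypothetical; a real project would pick a schema that matches the data it actually scrapes.

```python
import sqlite3

# Hypothetical rows, shaped the way a scraper might produce them.
scraped_rows = [
    ("Desk", 120.0, "https://example.com/desk"),
    ("Chair", 45.0, "https://example.com/chair"),
    ("Lamp", 30.0, "https://example.com/lamp"),
]

# ":memory:" keeps the demo self-contained; pass a file path to persist data.
connection = sqlite3.connect(":memory:")
connection.execute(
    """
    CREATE TABLE products (
        name  TEXT NOT NULL,
        price REAL NOT NULL,
        url   TEXT PRIMARY KEY
    )
    """
)

# Parameterized inserts keep scraped text from being interpreted as SQL.
# INSERT OR REPLACE updates the row when the same URL is scraped again.
connection.executemany(
    "INSERT OR REPLACE INTO products (name, price, url) VALUES (?, ?, ?)",
    scraped_rows,
)
connection.commit()

# Analysis step: find everything under a price threshold, cheapest first.
cheap = connection.execute(
    "SELECT name, price FROM products WHERE price < ? ORDER BY price",
    (100,),
).fetchall()
print(cheap)
```

Using the URL as the primary key is one possible design choice: it means repeated scraping runs refresh existing rows rather than piling up duplicates.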

Now, after we’ve learned the process of web scraping and all the cool stuff that happens behind the scenes, let’s move on to the different types of web scrapers.

Types of Web Scrapers

Web scraping tools come in all shapes and sizes. Knowing your options can help you choose the right one for your specific needs. Let’s look at some key considerations.

Pre-built vs Custom-built

When it comes to web scrapers, you’ve got two main routes: pre-built solutions and custom-built ones. Understanding the pros and cons of each can be the key to choosing a scraper that fits your project and skill level perfectly.

Pre-built Web Scrapers

Pros:

- Easy to Use: Ideal for beginners.

- Less Technical Know-How Needed: Low-code or no-code solutions.

- Quick Setup: Get started almost immediately.

- Community Support: Popular solutions have forums and guides.

Cons:

- Limited Customization: What you see is what you get.

- Might Not Suit Specific Needs: Not ideal for complex tasks.

- May Lack Advanced Features: Can be basic in functionality.

- Potential Cost: Free versions may have limitations.

Custom-built Web Scrapers

Pros:

- Highly Customizable: Tailor it to fit your exact needs.

- Scalable: Built to handle small to large projects.

- Advanced Features: Can include anything you’re willing to code.

- Complete Control: You own the code and the data.

Cons:

- Requires Coding Skills: Not for the non-programmer.

- Time-Consuming: Development takes time and effort.

- Little Community Support: You’re largely on your own for troubleshooting.

- Maintenance Required: Code may need updates and fixes.

Below, you can view a head-to-head comparison of pre-built and custom-built scrapers.

| Criteria | Pre-built Web Scrapers | Custom-built Web Scrapers |
|---|---|---|
| Ease of Use | High | Low |
| Customization | Low | High |
| Technical Skills | Low | High |
| Time Investment | Low | High |
| Cost | Variable | Variable |
| Community Support | Usually High | Low |
| Advanced Features | Limited | Almost Unlimited |

Choosing between a pre-built and custom-built web scraper really depends on your needs and skills. If you’re a beginner or need a quick solution, pre-built scrapers are easy and fast. For those looking for more control and customization, a custom-built option is the way to go. Consider your project’s requirements and your own comfort level to make the best choice.

Desktop Software vs Browser Extensions

Choosing between desktop software and browser extensions for web scraping is like choosing between a Swiss Army knife and a pocket knife. Both have their uses, but one might be a better fit for your specific needs. Let’s dig into the pros and cons.

Desktop Software

Pros:

- More Powerful: Built for heavy-duty scraping.

- Better For Large-Scale Scraping: Can handle bigger projects.

- Advanced Features: Offers more functionalities.

- Greater Stability: Less likely to crash during big jobs.

Cons:

- Requires Installation: You’ll need to download and set it up.

- Usually Pricier: Most good ones aren’t free.

- Steeper Learning Curve: Might take time to master.

- Resource-Intensive: May slow down your computer.

Browser Extensions

Pros:

- Easy to Install: Just a click and you’re set.

- Simpler Interface: User-friendly for beginners.

- Usually Free: Most basic versions cost nothing.

- Quick for Small Tasks: Ideal for one-off projects.

Cons:

- Less Powerful: Not built for heavy lifting.

- Not Ideal for Large Data Sets: May slow down or crash.

- Limited Features: What you see is often what you get.

- Browser-Dependent: Tied to your web browser’s performance.

| Criteria | Desktop Software | Browser Extensions |
|---|---|---|
| Power | High | Low |
| Ease of Install | Low | High |
| Cost | Variable | Usually Free |
| Scale | Large | Small to Medium |
| Advanced Features | Yes | Limited |
| Learning Curve | Steeper | Easier |
| System Resources | Higher Usage | Lower Usage |

Choosing between desktop software and browser extensions for web scraping? Desktop software is more powerful but requires more setup and resources. It’s ideal for big, complex projects. Browser extensions are easier to use and usually free, making them great for quick, smaller tasks. Pick the one that fits your project’s size and needs best.

Cloud-Based vs Local Scrapers

The final piece of the puzzle when choosing a web scraping tool is deciding where the actual scraping will occur: on a cloud-based platform or your local machine. Knowing the pros and cons of each will help you make an informed decision for your specific scraping needs.

Cloud-Based Scrapers

Pros:

- Accessible From Anywhere: Log in and scrape from any device.

- Powerful: Built to handle heavy-duty tasks.

- Scalable: Easily expand your operations.

- Lower Resource Usage: Doesn’t eat up your local storage or CPU.

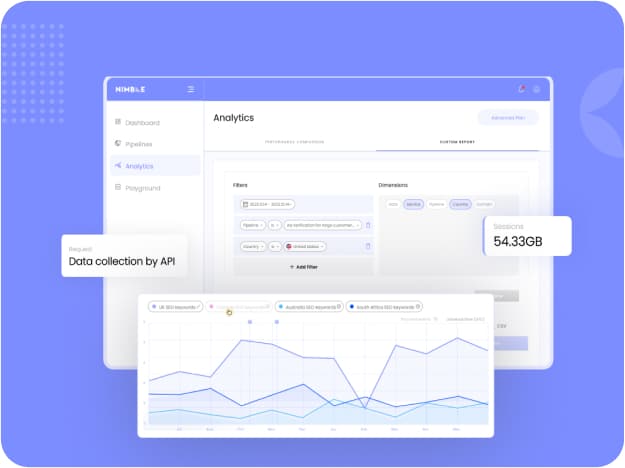

- Nimble’s Advanced APIs: For example, cloud-based solutions like Nimble offer APIs that let you scrape unlimited amounts of data effortlessly.

Cons:

- Monthly Fees: Most services have recurring costs.

- Dependent on Internet Connection: No internet, no scraping.

- Less Control: Your data is stored on external servers.

- Complexity: May require a learning curve to understand cloud-based settings.

Local Scrapers

Pros:

- No Ongoing Fees: Usually a one-time purchase or even free.

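- Works Offline After Fetching: You still need a connection to download pages, but parsing, storing, and analyzing the data you’ve already fetched doesn’t depend on one.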

- Complete Control: You decide where your data is stored.

- Direct Data Transfer: No need to download data from the cloud.

Cons:

- Takes up Local Resources: Your computer does all the work.

- Less Powerful: May struggle with large-scale scraping.

- Maintenance: You’re responsible for updates and troubleshooting.

- Limited Accessibility: Can only access from the machine where it’s installed.

| Criteria | Cloud-Based | Local Scrapers |
|---|---|---|
| Accessibility | High | Low |
| Cost | Monthly Fees | Usually One-time Cost |
| Resource Usage | Low | High |
| Internet Needed | Yes | Only While Fetching Pages |
| Control | Lower | Higher |
| Scalability | High | Variable |

Deciding between cloud-based and local scrapers? Cloud-based options are great for heavy-duty tasks and are accessible anywhere, but they cost more and need a stable internet connection. Local scrapers are more budget-friendly and keep your data on your own machine, but they use more of your computer’s resources. Pick the one that aligns best with your project’s needs.

User Interface Choices

The user interface of a web scraper can drastically affect your experience and efficiency. You’ll generally come across two main types: graphical user interfaces (GUIs) and code-based interfaces. Your choice will hinge on several factors, including your coding skills, project complexity, and the level of control you want over the scraping process.

Graphical User Interface (GUI) Scrapers

Pros:

- User-friendly: Intuitive design makes it easy to navigate.

- Quick to start: Little to no learning curve for most users.

- Visual cues: Helpful for identifying what data to scrape.

- Less technical: No need for programming knowledge.

Cons:

- Limited customization: Built-in features may not cover all your needs.

- Less control: The GUI dictates what you can and cannot do.

- Potential cost: Advanced features may come at a price.

Code-based Scrapers

Pros:

- High customization: If you can code it, you can do it.

- More control: Fine-tune your scraper exactly how you want.

- Scalable: Easily adapt the code for different projects.

- Cost-effective: Many code-based tools are open-source and free.

Cons:

- Steeper learning curve: Requires coding knowledge.

- Time-consuming: Writing and debugging code takes time.

- Less intuitive: No visual elements to guide you.

- Maintenance: Code updates may be needed as websites change.

Choosing between a GUI and a code-based interface often boils down to a trade-off between ease of use and control. If you’re new to scraping or have limited coding skills, a GUI-based scraper can be a great starting point. On the other hand, if you’re comfortable with coding and need a more tailored solution, code-based scrapers offer the flexibility and control you might be seeking.

Ethical and Legal Considerations

Navigating the legal landscape of web scraping can be tricky. Understanding the laws and best practices not only keeps you on the right side of the law but also ensures you’re scraping responsibly.

Is Web Scraping Legal?

The legality of web scraping is a grey area and often depends on how you’re doing it and what you’re doing with the data.

Generally Acceptable:

- Access to public data: If it’s publicly available, it’s usually fair game.

- Non-intrusive methods: Scraping at a pace that doesn’t overload the server is generally tolerated.

Potentially Illegal:

- Violating terms of service: Some websites prohibit scraping in their terms of service, particularly for content behind a login.

- Copyright infringement: Scraping and then using copyrighted material can lead to legal issues.

- Data privacy: Storing personal data without consent is a big no-no.

If you stick to public data and avoid overloading servers, you’re generally in the clear. But crossing the line into scraping behind logins, lifting copyrighted material, or storing personal data can land you in hot water. Always check a website’s terms of service and consider the ethical implications before diving in.

Responsible Web Scraping: Best Practices

Being legal doesn’t always mean you’re ethical. Here are some best practices to follow for responsible web scraping:

- Rate Limiting: Don’t overload a website’s server; respect their rate limits.

- User-Agent String: Always provide a User-Agent string to identify your scraper.

- Follow robots.txt: This file, served from the root of the site, tells automated clients which paths they may and may not access.

- Attribute Data: If you’re publishing the scraped data, giving credit is both ethical and appreciated.

- Be Transparent: If possible, let the website know you’re scraping and for what purpose.

By adhering to these guidelines, you not only minimize the risk of legal repercussions but also contribute to a more ethical web scraping community.
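Three of these practices (rate limiting, a User-Agent string, and robots.txt) translate directly into code. The sketch below uses Python's standard `urllib.robotparser` and a small hand-written `RateLimiter` class; the User-Agent value, the robots.txt contents, and the URLs are all made up for illustration, and no request is actually sent.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical identifier; a real one should point to a way of contacting you.
USER_AGENT = "example-scraper/0.1 (+https://example.com/contact)"

# Inline robots.txt for the demo. Against a live site you would instead call
# robots.set_url("https://<site>/robots.txt") followed by robots.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 1
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())


class RateLimiter:
    """Ensure at least `min_interval` seconds pass between consecutive calls."""

    def __init__(self, min_interval: float):
        self.min_interval = min_interval
        self._last = None

    def wait(self) -> None:
        if self._last is not None:
            remaining = self.min_interval - (time.monotonic() - self._last)
            if remaining > 0:
                time.sleep(remaining)
        self._last = time.monotonic()


# Use the site's requested delay if it declares one, else fall back to 1 second.
delay = robots.crawl_delay(USER_AGENT) or 1
limiter = RateLimiter(delay)

fetched, skipped = [], []
for url in [
    "https://example.com/listings",
    "https://example.com/private/data",
    "https://example.com/listings?page=2",
]:
    if not robots.can_fetch(USER_AGENT, url):
        skipped.append(url)
        continue
    limiter.wait()
    # A real scraper would send the request here, with USER_AGENT in its headers.
    fetched.append(url)

print("fetched:", fetched)
print("skipped:", skipped)
```

Checking robots.txt before the rate limiter means disallowed URLs never consume a request slot, so the delay applies only to pages the scraper would really fetch.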

Real-World Use Cases: What is Web Scraping Good For?

Web scraping isn’t just a technical term; it has real-world applications that can transform businesses and industries. Let’s look at some of the key areas where web scraping is making a significant impact.

1. Real Estate: Scraping Listings

In the dynamic world of real estate, having up-to-date and comprehensive information is often the key to making successful transactions. Web scraping, especially when aided by residential proxies for more reliable data access, serves as an invaluable tool in gathering this essential data. Here’s how:

- Collect Property Listings from Various Platforms: By scraping data from multiple online platforms, including MLS databases and classified websites, you can save time and have a more complete view of the market at your fingertips.

- Analyze Housing Prices and Trends: Web scraping allows you to pull data on recent sales, asking prices, and rental rates. This information helps professionals identify investment opportunities and price properties more effectively.

- Provide More Accurate Valuations for Properties: The data you gather can be used for precise property valuations, benefiting realtors who need to set realistic selling prices and investors looking to understand the value of a potential investment.

This important information is not just useful for industry professionals like realtors and investors, but also invaluable for homebuyers seeking to better understand the value of their prospective homes. There are many successful businesses in the real estate world that heavily rely on scraping real estate data online.

2. Market Research: Industry Stats and Insights

In today’s competitive business landscape, having a finger on the pulse of the market is essential for staying ahead. Web scraping provides an efficient way to gather this crucial intelligence. Here’s what it can do:

- Gathering Stats on Competitors: Web scraping enables businesses to collect a wide range of metrics about their competitors, from pricing and product range to marketing strategies. This helps in identifying gaps in the market and opportunities for differentiation.

- Analyzing Customer Reviews and Sentiments: By scraping customer reviews from various platforms, businesses can gain a clearer understanding of consumer sentiments and needs. This information is invaluable for improving products and services.

- Monitoring Industry Trends and News: Staying updated on industry news and trends is easier than ever with web scraping. From market reports to news articles, you can gather data to anticipate market shifts and opportunities.

Armed with these insights, companies are better positioned to make strategic decisions, from product development to marketing and beyond.

3. E-commerce: Price Comparison

In the fiercely competitive e-commerce landscape, staying ahead involves a lot more than just having a great product. Price often becomes a decisive factor for consumers. Web scraping serves as a powerful tool for both e-commerce businesses and shoppers in the following ways:

- Monitoring Competitor Pricing: Web scraping enables businesses to keep tabs on their competitors’ pricing strategies in near real time. This data can help in positioning your own products more competitively in the market.

- Dynamic Pricing Strategies for Your Own Products: With the data you’ve gathered, you can implement dynamic pricing models that adjust based on supply, demand, and competitor pricing. This maximizes both sales and profits.

- Helping Consumers Find the Best Deals Across Platforms: For shoppers, web scraping tools can aggregate prices from multiple online stores, helping them find the best deal for the products they are looking for.

In essence, web scraping creates a win-win scenario. Businesses can set more competitive prices while consumers can shop smarter and more economically.
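As a rough illustration of the price-comparison idea, the sketch below takes price strings the way they might appear on a page, normalizes them, and picks the cheapest offer per product. The store names, products, and prices are invented, and `parse_price` assumes US-style formatting with a dollar sign and comma thousands separators.

```python
from decimal import Decimal

# Hypothetical scraped offers: (store, product, price text as shown on the page).
offers = [
    ("store-a", "Wireless Mouse", "$24.99"),
    ("store-b", "Wireless Mouse", "$22.50"),
    ("store-a", "Laptop", "$1,099.00"),
    ("store-c", "Laptop", "$1,049.95"),
]


def parse_price(text: str) -> Decimal:
    """Turn a string like '$1,099.00' into an exact decimal amount."""
    return Decimal(text.replace("$", "").replace(",", "").strip())


def best_deals(offers):
    """Return {product: (store, price)} with the lowest price for each product."""
    best = {}
    for store, product, price_text in offers:
        price = parse_price(price_text)
        if product not in best or price < best[product][1]:
            best[product] = (store, price)
    return best


deals = best_deals(offers)
for product, (store, price) in deals.items():
    print(f"{product}: {price} at {store}")
```

`Decimal` is used instead of `float` so that prices compare and print exactly as written, which matters once you start computing differences or averages.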

4. Business: Lead Generation

In the realm of business, one of the most pressing challenges is finding new clients or customers. This often involves hours of manual research, but web scraping can streamline this process in several impactful ways:

- Extracting Contact Details from Professional Sites: Websites like LinkedIn or industry-specific directories are gold mines for potential business leads. Web scraping can automate the extraction of contact details such as email addresses, phone numbers, and company names, saving you hours of manual data entry.

- Scraping Data from Forums and Social Platforms: Online communities are often where your potential clients discuss needs, problems, and solutions. Web scraping tools can collect this data, helping you understand what potential leads are looking for and how to approach them.

- Enabling More Targeted and Effective Marketing Campaigns: With the rich data sets generated by web scraping, businesses can create highly targeted marketing campaigns. Whether it’s email marketing or social media ads, you can tailor your messages to specific audiences, increasing engagement and conversion rates.

By leveraging web scraping for lead generation, businesses can not only expand their client base but also improve the quality of the leads they engage with.

Final Words

Web scraping is revolutionizing industries by providing critical data and insights. While ethical concerns remain, they’re navigable with smart practices. As the landscape evolves, advanced tools like Nimble’s AI-powered, cloud-based scrapers are setting new standards in efficient and ethical data collection. In today’s data-centric world, understanding web scraping is indispensable.

Answers to frequently asked questions

What is web scraping?

It’s the practice of extracting data from websites for analysis and insights.

Is web scraping legal?

The legality of web scraping is a grey area. It’s mostly okay if you’re scraping public data and not overloading servers. Always check a website’s terms of service to be sure.

Do I need coding skills to scrape websites?

Not necessarily. There are pre-built web scraping tools that require no coding. But if you need customized data or want more control, some coding knowledge is helpful.

What is web scraping used for?

Web scraping is used in various fields like market research, data analysis, and lead generation. It’s also common in sectors like real estate, finance, and e-commerce for gathering data.
